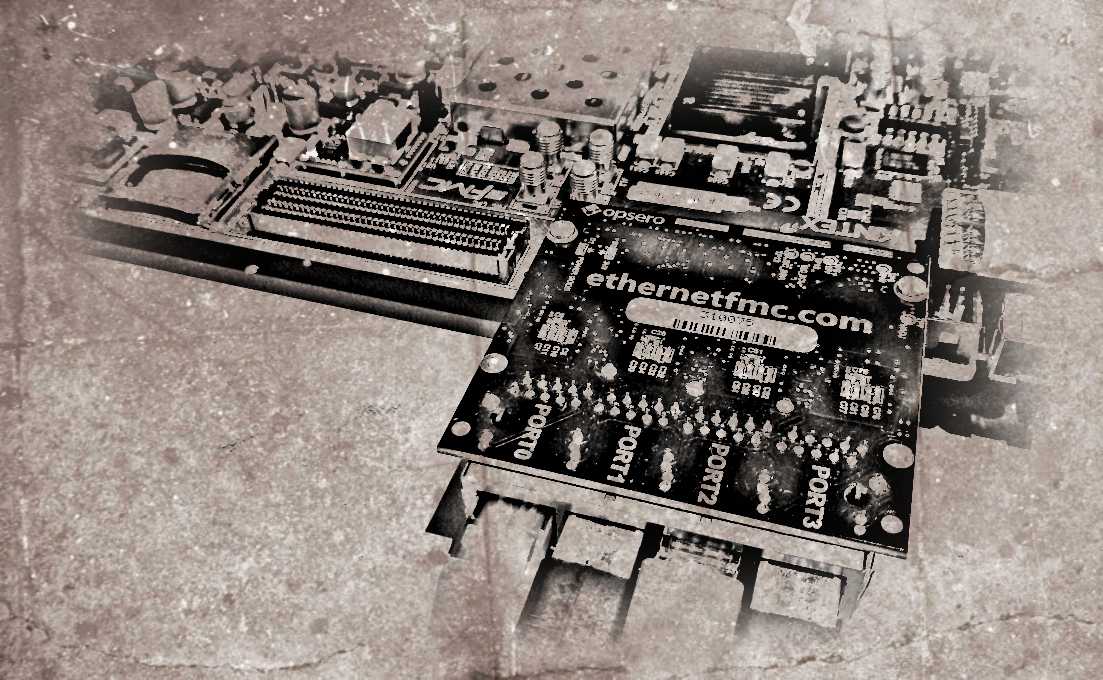

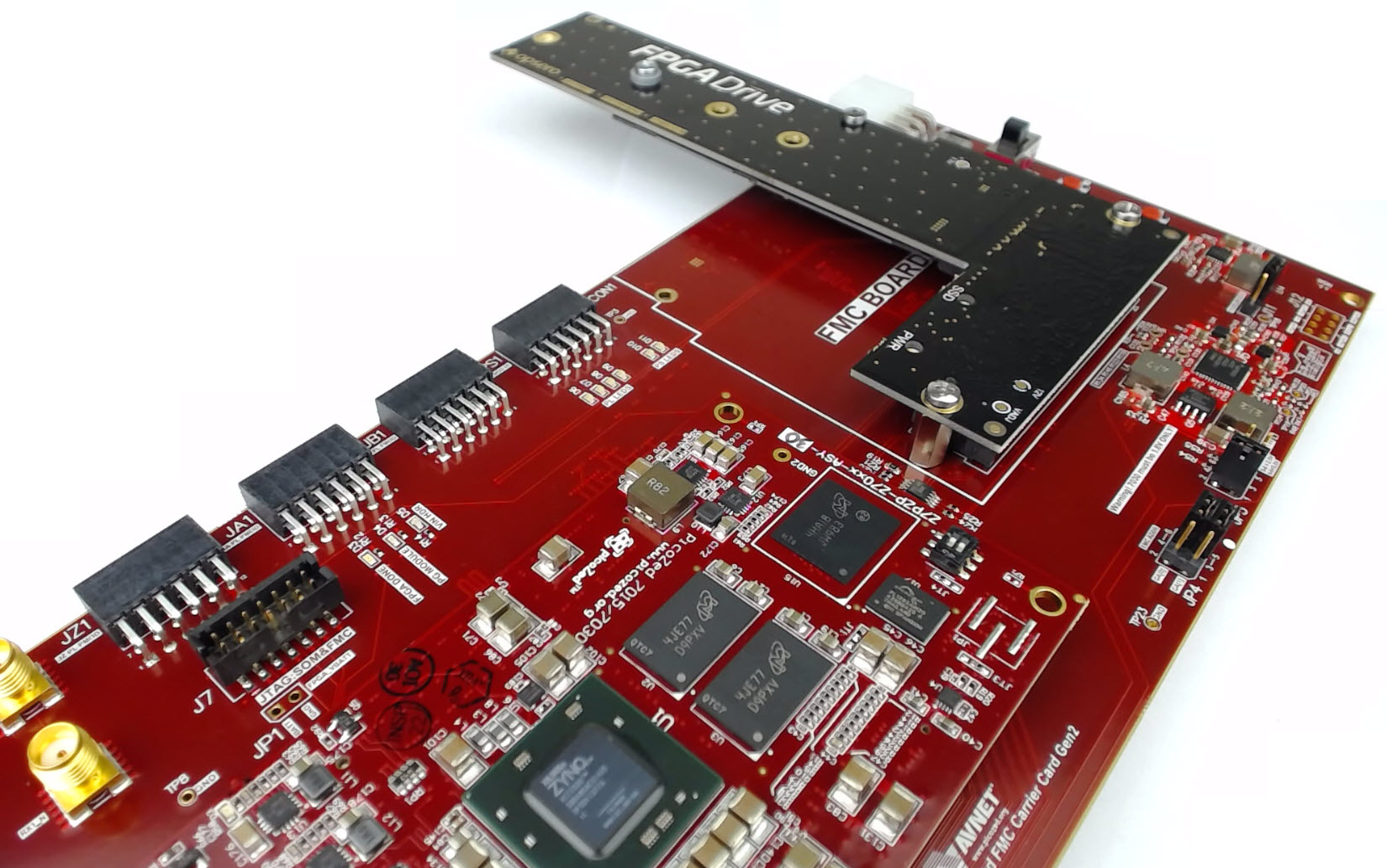

For those of you who want to experiment with processorless Ethernet on FPGAs, I’ve just released a 4-port example design that supports these Xilinx FPGA development boards:

- Artix-7 AC701 Evaluation board

- Kintex-7 KC705 Evaluation board

- Kintex UltraScale KCU105 Evaluation board

- Virtex-7 VC707 Evaluation board

- Virtex-7 VC709 Evaluation board

- Virtex UltraScale VCU108 Evaluation board

- Virtex UltraScale+ VCU118 Evaluation board

Here’s the Git repo for the project: Processorless Ethernet on FPGA

Why processorless?

Pure hardware designs can trump software where the need for low latency and/or high throughput outweighs the need for flexibility and complexity (e.g., support for complex protocols). Many applications rely on hardware-based packet processing to achieve their performance. High-frequency trading platforms are often fed market pricing over multicast UDP, so their profitability is directly linked to their ability to process UDP with the lowest possible latency. Network security devices that monitor traffic usually need to be as transparent as possible while also detecting threats and taking action with the lowest possible delay. Whatever your reason for processing Ethernet frames in the FPGA fabric, make sure that you consider both sides of the coin:
