One of my most common customer requests is to speed up execution of a software application using FPGA hardware acceleration. If the application runs on a PC or server, you can achieve impressive performance gains by using off-the-shelf FPGA development boards for PCI Express.

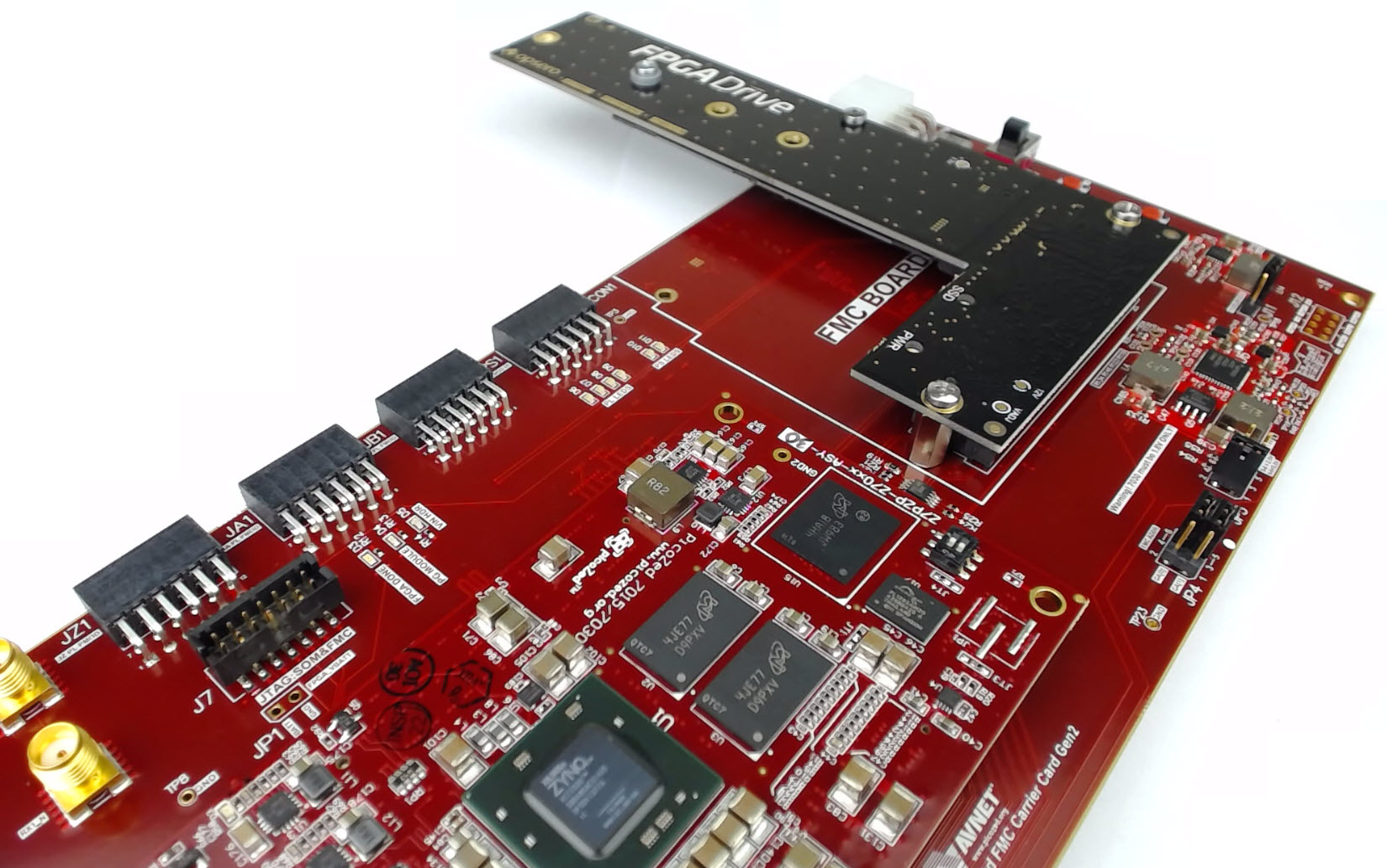

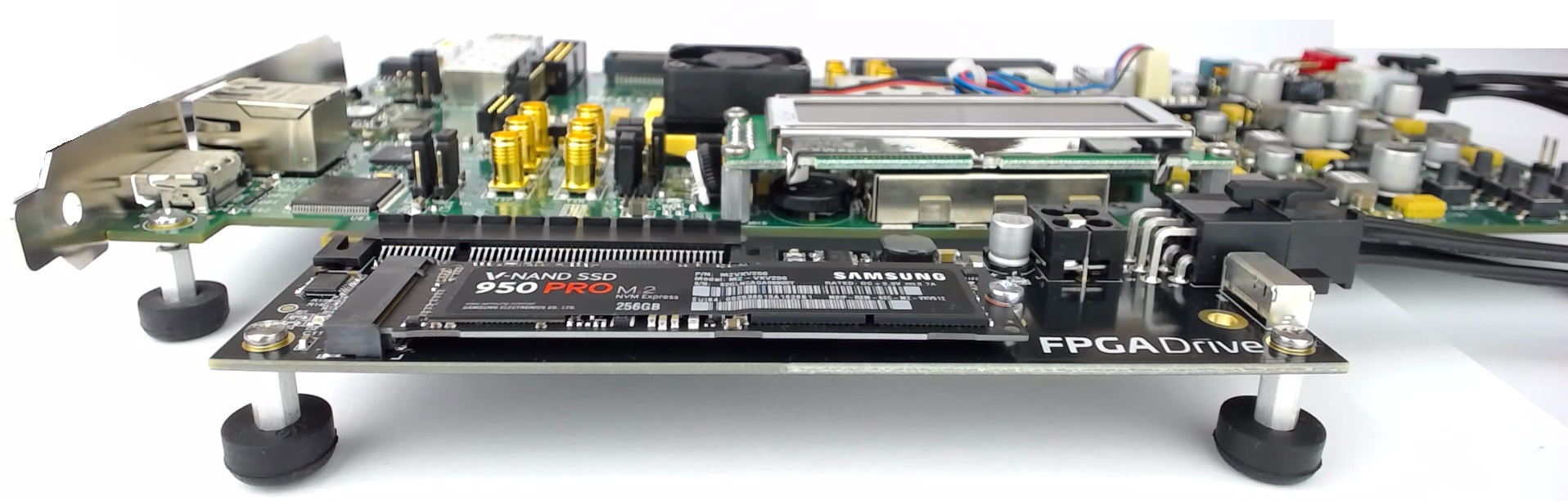

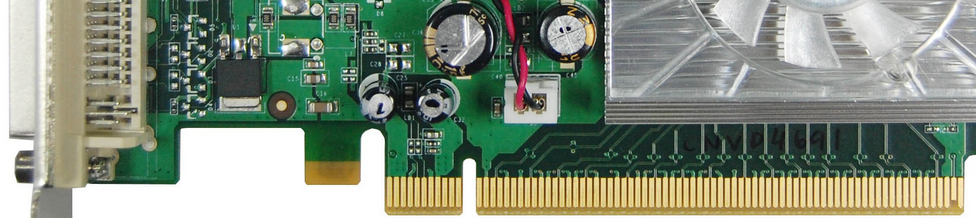

Here is a comparison of the available 7 Series FPGA boards for PCI Express applications:

| AC701 Artix-7 | KC705 Kintex-7 | VC707 Virtex-7 | VC709 Virtex-7 |
| --- | --- | --- | --- |
| $1295 | $1695 | $3495 | $4995 |
| XC7A200T-2FBG676C | XC7K325T-2FFG900C | XC7VX485T-2FFG1761 | XC7VX690T-2FFG1761C |
| 4-lane Gen2 PCIe | 8-lane Gen2 PCIe | 8-lane Gen2 PCIe | 8-lane Gen3 PCIe |
| 1GB DDR3 SODIMM | 1GB DDR3 SODIMM | 1GB DDR3 SODIMM | 4GB DDR3 SODIMM x2 |
| 8Kb EEPROM | 8Kb EEPROM | 8Kb EEPROM | 1KB EEPROM |
| No BPI Flash | 128MB BPI Flash | 128MB BPI Flash | 32MB BPI Flash |
| 32MB Quad SPI | 16MB Quad SPI | 16MB Quad SPI | No Quad SPI Flash |
| SD Card slot | SD Card slot | SD Card slot | No SD |
| No LPC FMC | 1x LPC FMC | No LPC FMC | No LPC FMC |
| 1x HPC FMC (*) | 1x HPC FMC (*) | 2x HPC FMC | 1x HPC FMC (*) |
| 1x SFP | 1x SFP+ | 1x SFP+ | 4x SFP/SFP+ |
| 1Gb Ethernet | 1Gb Ethernet | 1Gb Ethernet | No Ethernet |
| No USB | No USB | No USB | No USB |
| UART over USB | UART over USB | UART over USB | UART over USB |
| HDMI out | HDMI out | HDMI out | No Video |
| XADC header | XADC header | AMS port | No Analog |

- (*) Note: These HPC FMC connectors are only partially populated, which means that they won't be able to support all standard FMCs.

- There are many more FPGA boards for PCIe on the market, but I chose to limit the comparison to those that are more strongly supported by Xilinx.

These types of boards are so useful in the hardware acceleration space because PCI Express is the highest-bandwidth, lowest-latency link that you can have between a PC’s CPU and an external FPGA. There’s no use shipping off work to an FPGA if the time it takes the data to get there and back is more than the time saved through improved processing efficiency.
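To make that trade-off concrete, here is a rough break-even sketch in Python. It is only a back-of-the-envelope model: the per-lane rates are the theoretical PCIe figures after line encoding (Gen2 at 5 GT/s with 8b/10b, Gen3 at 8 GT/s with 128b/130b), and it ignores packet overhead, driver overhead and any overlap between transfer and processing. The function names and the workload numbers in the usage note are hypothetical, chosen just for illustration.

```python
# Rough break-even check for offloading work to an FPGA over PCIe.
# Simplified model: transfers in and out are sequential, not overlapped,
# and real-world throughput will be lower than these theoretical rates.

# Theoretical per-lane payload rate in bytes/s, after line encoding.
LANE_BYTES_PER_SEC = {
    "gen2": 5e9 * 8 / 10 / 8,     # 5 GT/s, 8b/10b encoding
    "gen3": 8e9 * 128 / 130 / 8,  # 8 GT/s, 128b/130b encoding
}


def link_bandwidth(gen, lanes):
    """Theoretical one-direction link bandwidth in bytes/s."""
    return LANE_BYTES_PER_SEC[gen] * lanes


def offload_time(bytes_in, bytes_out, fpga_compute_s, bandwidth, latency_s=0.0):
    """Time to ship the data to the FPGA, process it, and ship results back."""
    transfer_s = (bytes_in + bytes_out) / bandwidth
    return 2 * latency_s + transfer_s + fpga_compute_s


def worth_offloading(cpu_compute_s, **offload_args):
    """True if the full FPGA round trip beats doing the work on the CPU."""
    return offload_time(**offload_args) < cpu_compute_s
```

As a usage example, take a hypothetical job that sends 100 MB to a 4-lane Gen2 board, gets 100 MB back, and needs 0.05 s of FPGA processing. `link_bandwidth("gen2", 4)` gives 2 GB/s, so the transfer alone costs about 0.1 s and the round trip about 0.15 s. Offloading pays off if the CPU would need 0.5 s for the same job, but not if it would need only 0.12 s.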
