Update 2020-02-07: Missing Link Electronics has released their NVMe Streamer product, which offloads NVMe to the FPGA for maximum SSD performance, and they have an example design that works with FPGA Drive FMC!

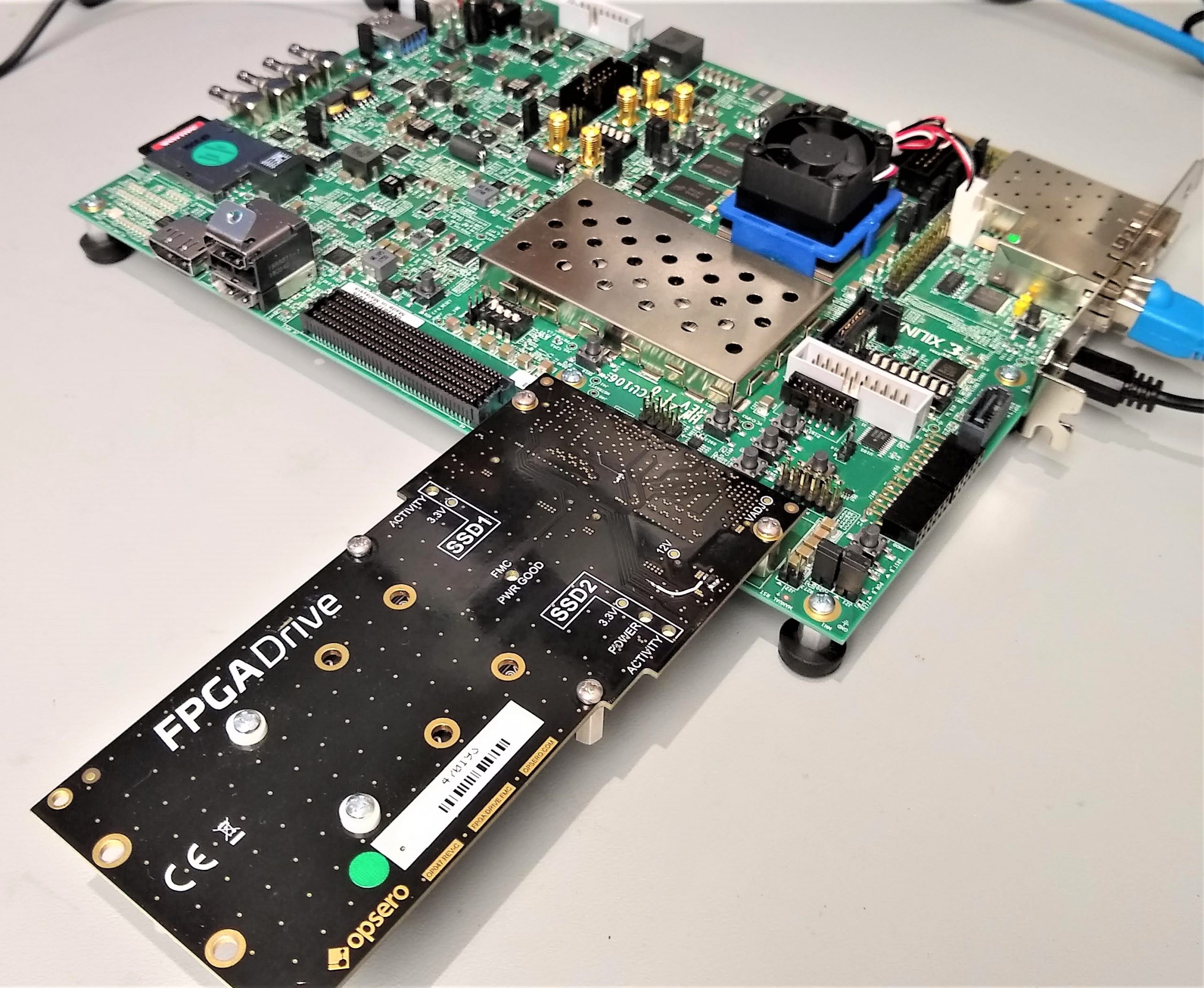

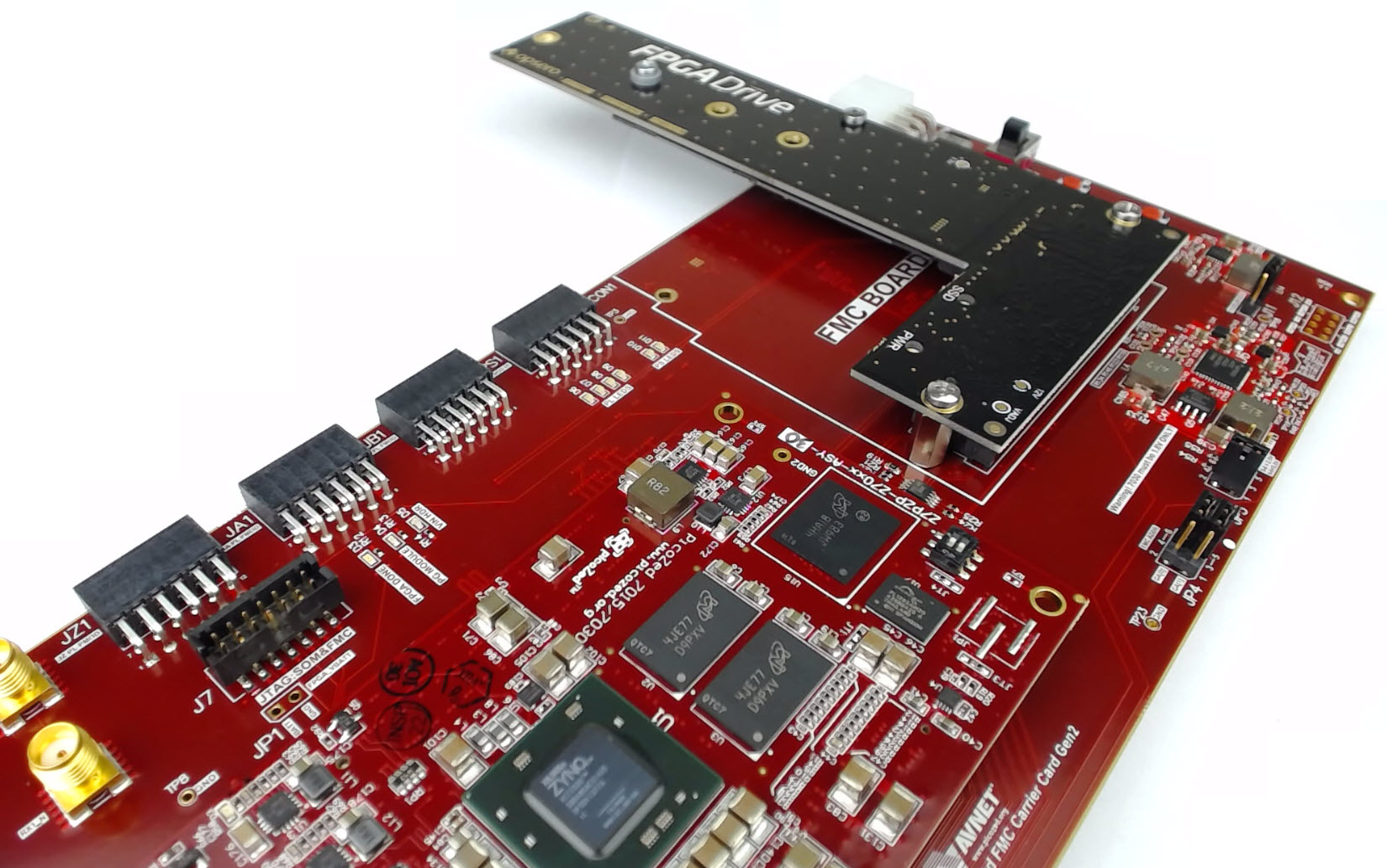

Probably the most common question that I receive about our SSD-to-FPGA solution is: what are the maximum achievable read/write speeds? A complete answer would need a post of its own, so today I’m going to show you what speeds we can get with a simple but highly flexible setup that doesn’t use any paid IP. I’ve run some simple Linux scripts on this hardware to measure the read/write speeds of two Samsung 970 EVO M.2 NVMe SSDs. If you have our FPGA Drive FMC and a ZCU106 board, you can download the boot files and the scripts and run the same tests on your own hardware. Let’s jump straight to the results.
